Memory Isn't Learning

- Pranav Singh

- Apr 4

Updated: Apr 15

In my last post I argued that memory in AI agents isn't really a storage problem — it's an interpretation problem. Deciding what something means, whether it matters, and how long it should keep mattering.

That framing helped explain a lot of what I'd seen go wrong in production. But recently, working through a very different use case, I ran into something that memory alone doesn't solve.

Even when a system remembers correctly, it doesn't necessarily improve. It just accumulates more history.

The loop that research agents close

I've been following some of the recent work on autonomous research agents — systems that generate hypotheses, run experiments, evaluate results, and iterate. What's interesting isn't any individual step. It's that the system closes the loop. It does something, observes what happened, and changes what it does next.

That framing stuck with me. Because when you step back and look at most real-world AI systems, that loop is usually missing.

Where I actually ran into this

I've been experimenting with using AI to help my kids with early math — addition, subtraction, the basics. Nothing ambitious. Just working through exercises with them.

On the surface it works fine. The explanations are patient. The examples are reasonable. Any single interaction looks good.

But after a few days, something started to stand out. My son was consistently struggling with subtraction problems that required borrowing. These weren't random mistakes: he'd get the simpler ones right. It was specifically the borrowing step that kept breaking.

The system could explain the concept again if asked. It could generate more problems. But it didn't seem to notice that this had been happening repeatedly. The fact that borrowing had been a sticking point for several days wasn't shaping what came next.

Each interaction made sense on its own. Over time, nothing changed.

Context isn't the same as learning

You can store everything. You can retrieve relevant pieces. But unless something is actually using that information to update behavior in a structured way, you don't really have learning.

If you think about how a teacher operates with younger kids, the difference becomes clear quickly. They're not just remembering what happened. They're forming a view of the student.

They notice whether a mistake is consistent or occasional. Whether it's tied to a specific concept. Whether something that looked resolved comes back after a gap. And once that pattern becomes clear, they adjust — sometimes immediately, sometimes later, sometimes by doing less rather than more.

Most AI systems today don't really do this. Even with memory, the past mostly informs the current answer. It doesn't reliably shape what happens across sessions. So you end up with systems that respond well, but don't really evolve.

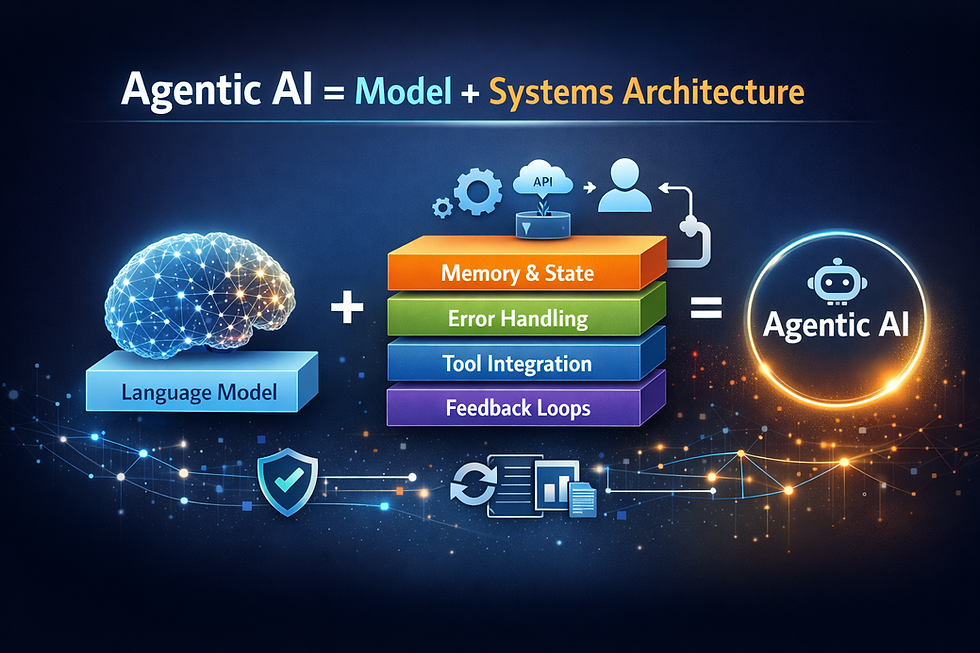

The same systems problem again

You're no longer dealing with stateless requests. You're dealing with something that evolves over time, where past interactions are supposed to influence future behavior in ways that need to be explicit.

If that influence is implicit — just logs sitting somewhere — the system remembers but doesn't change.

What you actually need starts to look more like a maintained state about the person. Not what they said, but what you now believe about where they are: what they understand, where they're inconsistent, what should be revisited, and when. That's a structurally different problem from retrieval.

What a minimal version looks like

I started with something deliberately small. Just tracking a few things: what problems were attempted, which ones were correct, whether the same kind of mistake showed up more than once, and when a concept was last practiced.
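To make that concrete, here is a minimal sketch of what such a per-concept record could look like. The class and field names (`ConceptState`, `mistake_counts`, and so on) are my own placeholders for illustration, not a description of any particular library or of the exact system I ran.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ConceptState:
    """Hypothetical record of what we believe about one concept for one learner."""
    attempts: int = 0
    correct: int = 0
    # How often each kind of mistake has shown up, e.g. {"missed_borrow": 2}.
    mistake_counts: Counter = field(default_factory=Counter)
    last_practiced: Optional[date] = None

    def record(self, was_correct: bool, day: date,
               mistake_kind: Optional[str] = None) -> None:
        """Log one attempt and update the running state."""
        self.attempts += 1
        self.last_practiced = day
        if was_correct:
            self.correct += 1
        elif mistake_kind is not None:
            self.mistake_counts[mistake_kind] += 1

    def repeated_mistakes(self) -> list:
        """Mistake kinds seen more than once, in alphabetical order."""
        return sorted(k for k, n in self.mistake_counts.items() if n > 1)
```

The point of keeping it this small is that every field answers one of the four questions above, and nothing in it depends on replaying the raw conversation history.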

Even with that, the behavior starts to shift. Errors that look similar at first begin to separate. Missing a borrow step is not the same as a random arithmetic slip. And once you treat those differently, what comes next can be different too. The next set of problems doesn't need to be random.
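One way to separate those two kinds of error, sketched under some assumptions: compute the answer a learner would get if they borrowed ten in a column but never decremented the next column, and compare it with what they wrote. The function names and labels here are hypothetical, and this covers only one borrowing mistake pattern among several possible ones.

```python
def answer_if_borrow_forgotten(minuend: int, subtrahend: int) -> int:
    """Answer produced by borrowing 10 in a column but never decrementing
    the next column. Assumes minuend >= subtrahend >= 0."""
    result, place = 0, 1
    while minuend > 0 or subtrahend > 0:
        top, bottom = minuend % 10, subtrahend % 10
        if top < bottom:
            top += 10  # borrow, but leave the next column untouched
        result += (top - bottom) * place
        place *= 10
        minuend //= 10
        subtrahend //= 10
    return result


def classify_attempt(minuend: int, subtrahend: int, given: int) -> str:
    """Label one subtraction attempt as correct, a missed borrow, or another slip."""
    if given == minuend - subtrahend:
        return "correct"
    if given == answer_if_borrow_forgotten(minuend, subtrahend):
        return "missed_borrow"
    return "other_slip"


def next_problem_focus(labels: list) -> str:
    """Pick borrowing practice once the same mistake has shown up at least twice."""
    return "borrowing" if labels.count("missed_borrow") >= 2 else "mixed"
```

For 52 - 17, an answer of 45 is labeled `missed_borrow`, while 36 is labeled `other_slip`. The threshold of two repeats is an arbitrary choice for the sketch.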

The timing turned out to matter more than I expected. Some things are better revisited after a gap rather than immediately. A system that only reacts within the current session doesn't have a way to act on that.
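A rough sketch of how a system might act on that gap, assuming a simple expanding-interval rule: the wait doubles after a correct revisit and resets after a mistake. The rule, the 30-day cap, and the names are assumptions chosen for illustration, not something I have tuned.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ReviewSchedule:
    """Hypothetical per-concept schedule for revisiting after a gap."""
    last_practiced: date
    gap_days: int = 1

    def is_due(self, today: date) -> bool:
        """True once at least gap_days have passed since the last practice."""
        return today >= self.last_practiced + timedelta(days=self.gap_days)

    def update(self, was_correct: bool, today: date) -> None:
        """Double the gap (capped at 30 days) on success, reset to 1 day on a mistake."""
        self.gap_days = min(self.gap_days * 2, 30) if was_correct else 1
        self.last_practiced = today
```

Because the schedule lives outside any single session, a later session can ask `is_due(today)` and bring borrowing back even if nothing in the current conversation mentions it.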

The gap isn't in the model

A stronger model helps at the margins, but it doesn't change the structure. You can have a very capable model that still behaves like a stateless system across sessions.

Autonomous research agents, systems that improve based on their own outputs, are starting to close this loop for machines. But when it comes to helping humans learn over time, most systems are still operating session by session. LangGraph gives you state machine primitives. LangSmith gives you visibility into what happened. What we don't really have yet is a clean abstraction for a system that continuously interprets interaction signals and updates its model of a specific person.

"Learning loop" is the closest term I have for it. I'm not sure it quite captures the shape of the problem.

What I'm still not sure about

In the small system I've been working with, the loop is simple enough. Log an attempt, interpret it, update a small state model, let that shape what comes next, revisit things later when it makes sense. Even at that scale, it behaves differently — less like a sequence of independent interactions, more like something gradually forming an understanding.

What I don't know is how well this generalizes. In a narrow domain like early math, the structure is manageable. In more complex settings, it's not obvious what the right representation of "understanding" should even look like, or how much of it transfers across different contexts.

That part feels less settled — and probably more interesting.
