What It Takes to Ship an Agent You Can Trust

- Pranav Singh

- Mar 28

The first time you watch an AI agent demo go right, it’s hard not to think the problem is mostly solved.

The model understands the goal. It calls the right tool. It chains a few steps together. The task completes cleanly.

Then someone tries to run it on a real system, with real users, and the thing that was impressive in the demo becomes quietly unreliable in production. Not broken. Not crashing. Just subtly wrong often enough that you can’t trust it.

I’ve been thinking about why that gap is so persistent, and I don’t think it’s primarily a model capability problem. The demos aren’t lying. They’re showing you a system that works under one specific set of conditions. The production problem is that those conditions are almost never what you actually get.

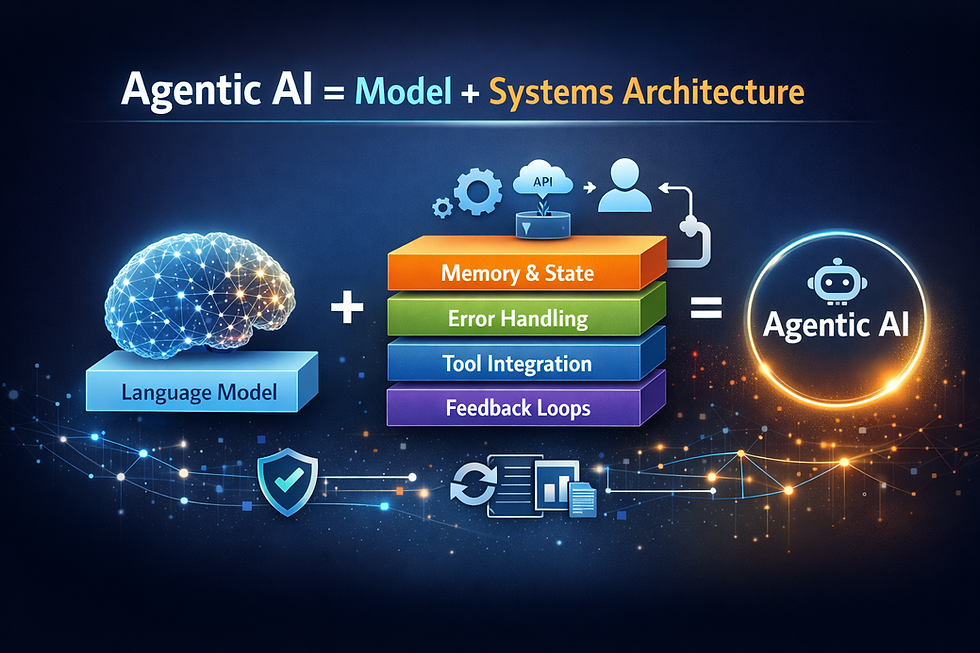

In my previous posts I argued that agentic AI is as much a systems problem as a model problem, and that memory becomes an interpretation problem under real usage. What I’ve been trying to work out since then is where exactly production agents break down, and whether the current wave of tooling, including things like NemoClaw, actually addresses it.

The happy path is not the system

Most agent demos are built around a clean linear execution:

user input → model → tool → answer

That path works because everything cooperates. The model interprets correctly. The tool responds with clean data. The user doesn’t change their mind halfway through.

In production, that path is the exception, not the baseline.

Tools time out. They return partial results that look valid but aren’t complete enough to act on safely. The model is uncertain but doesn’t flag it. Users ask ambiguous things.

Sometimes a step technically succeeds but doesn’t actually move the task forward.

That sounds obvious when you say it. It’s surprisingly easy to underestimate until you’re staring at it in a production log.

What took me a while to internalize is that demo agents and production agents aren’t just different in scale — they’re built on different assumptions. A demo is designed around what happens when everything works. A production system has to be designed around what happens when it doesn’t.

Tool calling is the easy part

A lot of recent tooling, including NemoClaw, has made tool invocation more structured and predictable. Better schema alignment. More reliable calls. Cleaner outputs. These are real improvements.

But they don’t solve what turns out to be the harder problem: what to do with the result.

In production, tools behave like external systems, not pure functions. They return valid-looking responses that may not actually answer the question. They succeed with stale data. They fail under conditions that are hard to reproduce.

After running into this enough, you stop treating tool outputs like answers and start treating them like signals.

Sometimes you retry with slightly different inputs. Sometimes you take a different approach. Sometimes the right move is to pause and ask the user instead of continuing.
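One way to make that concrete is to classify each tool result before acting on it. The sketch below is only an illustration under my own assumptions, not any framework's API: `ToolResult`, `Decision`, `assess`, and the freshness and retry thresholds are hypothetical names and values picked for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    RETRY = "retry"
    ASK_USER = "ask_user"


@dataclass
class ToolResult:
    ok: bool              # the call itself returned without error
    complete: bool        # the tool reported a full (not partial) result
    age_seconds: float    # how old the underlying data is
    attempts: int = 1     # how many times this call has been tried so far


def assess(
    result: ToolResult,
    max_age_seconds: float = 300.0,  # assumed freshness limit
    max_attempts: int = 3,           # assumed retry budget
) -> Decision:
    """Treat the result as a signal: decide the next move, not just pass/fail."""
    usable = (
        result.ok
        and result.complete
        and result.age_seconds <= max_age_seconds
    )
    if usable:
        return Decision.PROCEED
    if result.attempts < max_attempts:
        return Decision.RETRY
    # Out of retries and still not good enough to act on:
    # pause and ask instead of continuing on a weak result.
    return Decision.ASK_USER
```

The point of the shape is that "the call succeeded" is only one of three inputs. A partial or stale result goes down the retry path, and the fallback when retries run out is a question to the user rather than a guess.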

None of this shows up in a demo. It becomes most of the work in production.

What NemoClaw actually solves — and what it doesn’t

NemoClaw, announced at GTC, is a meaningful step forward. It brings something OpenClaw was missing: a real containment layer.

You can define what the agent is allowed to access, what it can call, where it can connect. That’s not a nice-to-have — it’s required for any serious deployment.

The security issues around early OpenClaw usage showed up quickly enough that it became a practical blocker, not just a theoretical concern. NemoClaw addresses that directly.

But it’s solving a different class of problem.

It answers what the agent is allowed to do. It doesn’t answer whether what it’s doing is actually correct.

An agent running in a well-sandboxed environment can still proceed confidently on a partial result. It can still compound a small misinterpretation across multiple steps. It can still complete something that technically satisfies the request while missing what the user actually meant.

The sandbox sees permissions. It doesn’t see reasoning.

NemoClaw is necessary infrastructure. It’s not sufficient for reliability.

The gap that keeps showing up: hesitation

The failure mode I keep coming back to is the system moving forward when it shouldn’t.

There’s just enough signal to continue, but not enough to be right.

In a demo, this looks like progress. In production, it’s how small errors compound into outcomes that feel off, even if nothing clearly broke.

The tricky part is that nothing technically breaks. The agent completes the task. The tool didn’t fail. The model didn’t hallucinate. The system just didn’t pause at the moment it should have — and by the time the user notices, the error has already propagated through several downstream steps.

This isn’t really a capability problem. It’s more of a judgment problem.

Stronger models help, but they don’t remove the need for the system to decide when to act and when to pause. Right now, that behavior tends to be implicit — spread across prompts and bits of logic rather than designed as something explicit.

There are some early attempts in this direction. LangGraph, for example, forces you to think in terms of state transitions instead of linear chains. Reflection-style patterns, where the system evaluates its own intermediate output, can catch certain classes of errors.

They help, but they don’t fully solve it.

I don’t think we have a clean abstraction for this yet.

From chains to loops

Most demo agents are chains. A sequence of steps that executes once and returns a result.

Production systems behave more like loops.

The system acts, observes what happened, adjusts, and tries again if needed.

Once you start thinking this way, a few things shift.

Intermediate validation becomes critical. You need to know whether each step actually did what it was supposed to do, not just whether it completed.

State starts to matter more than you expect. A looping system carries context across attempts, and if that state is messy or implicit, errors start compounding in ways that are hard to trace.

And observability stops being optional. You need to see the full trajectory of a run — not just the final output, but how the system got there.
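Those three shifts can be sketched together in a few lines. This is a minimal illustration under my own assumptions rather than how any particular framework does it: `RunState`, `run_loop`, and the `act` and `validate` callbacks are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class RunState:
    """Explicit state carried across attempts."""
    goal: str
    attempts: int = 0
    result: Optional[str] = None
    # Full trajectory of the run, not just the final output.
    trace: List[dict] = field(default_factory=list)


def run_loop(
    state: RunState,
    act: Callable[[RunState], str],
    validate: Callable[[RunState, str], bool],
    max_attempts: int = 3,
) -> RunState:
    """Act, observe, and try again until a step validates or the budget runs out."""
    while state.attempts < max_attempts:
        state.attempts += 1
        output = act(state)
        # Intermediate validation: did the step do its job, not just complete?
        valid = validate(state, output)
        # Record every attempt so the run can be inspected afterwards.
        state.trace.append(
            {"attempt": state.attempts, "output": output, "valid": valid}
        )
        if valid:
            state.result = output
            break
    return state
```

If no attempt validates, `result` stays `None` and the trace still shows every attempt, which is the case a plain chain with bolted-on retries tends to hide.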

The systems that feel production-ready are usually the ones designed this way from the beginning, not the ones that added retries after something broke.

Latency is a design constraint

In a demo, a few model calls feel fast enough.

In production, tasks expand.

Model → tool → model → tool → model.

Even if each step is fast, the total adds up.

Most of the meaningful improvements here don’t come from faster models alone. They come from system choices: avoiding unnecessary model calls, running independent steps in parallel, caching results, streaming partial outputs.

In one system, removing a single unnecessary model round-trip had more impact on end-to-end latency than switching models entirely.

It starts to feel less like performance tuning and more like a design constraint. If you assume every step sits on the critical path, you build differently from the start.

Silent failures are what actually break trust

The hardest failures to deal with aren’t the obvious ones. They’re the ones that look correct.

The agent completes the task. The output is plausible. But it’s built on a slightly wrong assumption from earlier in the process.

Nothing throws an error. Nothing crashes. The system just slowly becomes less trustworthy.

These are hard to detect because they don’t show up in standard logs. You only see them when you look across interactions — when users correct the system, abandon tasks, or keep rephrasing the same request.
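As a rough illustration of what looking across interactions can mean, the sketch below counts how often a user rewords what looks like the same request. It is a crude proxy under my own assumptions: `similarity` and `count_rephrasings` are hypothetical helpers, the 0.6 threshold is arbitrary, and character-level matching from the standard library's `difflib` will miss rephrasings that share meaning but not wording.

```python
from difflib import SequenceMatcher
from typing import List


def similarity(a: str, b: str) -> float:
    """Rough textual similarity in [0, 1]; a crude proxy, not semantic matching."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def count_rephrasings(messages: List[str], threshold: float = 0.6) -> int:
    """Count consecutive user messages that look like the same request reworded."""
    return sum(
        1
        for prev, curr in zip(messages, messages[1:])
        # Exact repeats are skipped; only near-duplicates count as rephrasings.
        if prev != curr and similarity(prev, curr) >= threshold
    )


if __name__ == "__main__":
    session = [
        "show my open invoices",
        "show me my open invoices",
        "thanks",
    ]
    print(count_rephrasings(session))
```

A count like this is not a verdict on any single run. It is a signal to go and read the trajectories behind the sessions where it spikes.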

That’s also where the gap between infrastructure observability and behavioral observability becomes clear.

Knowing what the agent did — which tools it called, which endpoints it hit — isn’t the same as knowing whether it made good decisions.

Without that second layer, these failures tend to persist longer than they should.

What this means in practice

The patterns that seem to hold up aren’t complicated, but they’re easy to underinvest in.

Treat tool outputs as signals, not answers. The question isn’t just whether a tool succeeded, but whether the result is good enough to act on.

Be deliberate about when the system should pause instead of proceed. If that decision is left implicit, it usually defaults to continuing.

Design the system as a loop if it’s expected to recover. Chains with retries added later tend to behave unpredictably under pressure.

And invest early in understanding behavior, not just infrastructure. Knowing what the system did is useful. Knowing whether it made sense is what actually helps you improve it.

NemoClaw and similar systems give you a strong foundation around security and execution boundaries. That’s important. But the failure modes that actually affect user trust still sit above that layer.

In my previous posts I wrote about memory and about why agents are as much a systems problem as a model problem.

This feels like a continuation of the same idea.

The difficulty isn’t getting an agent to work once. It’s getting it to behave predictably when everything around it is slightly unreliable.

Better models — and better infrastructure — will keep raising the ceiling.

But the gap between demos and production doesn’t really close there.

It probably closes at the system layer, one failure mode at a time.

I’m still not sure we have the right abstractions for that.
