Memory in AI Agents: Why Interpretation Matters More Than Storage

- Pranav Singh

- Mar 16


The first time I shipped memory into a production conversational system, I thought the problem was mostly solved.

Store the conversation. Embed it. Retrieve what’s relevant. Feed it back to the model.

It held up fine until it didn't. A user came back to a conversation the system half-remembered in the wrong way. The retrieval wasn't broken. The model wasn't hallucinating. The embeddings were doing exactly what I told them to do. The system had just decided that something throwaway deserved to be permanent.

That experience — and the many variations of it that followed — eventually made something clear to me: the hard problem of agent memory isn’t storage. It’s interpretation. Deciding what something means, how long it should matter, and whether it should matter at all.

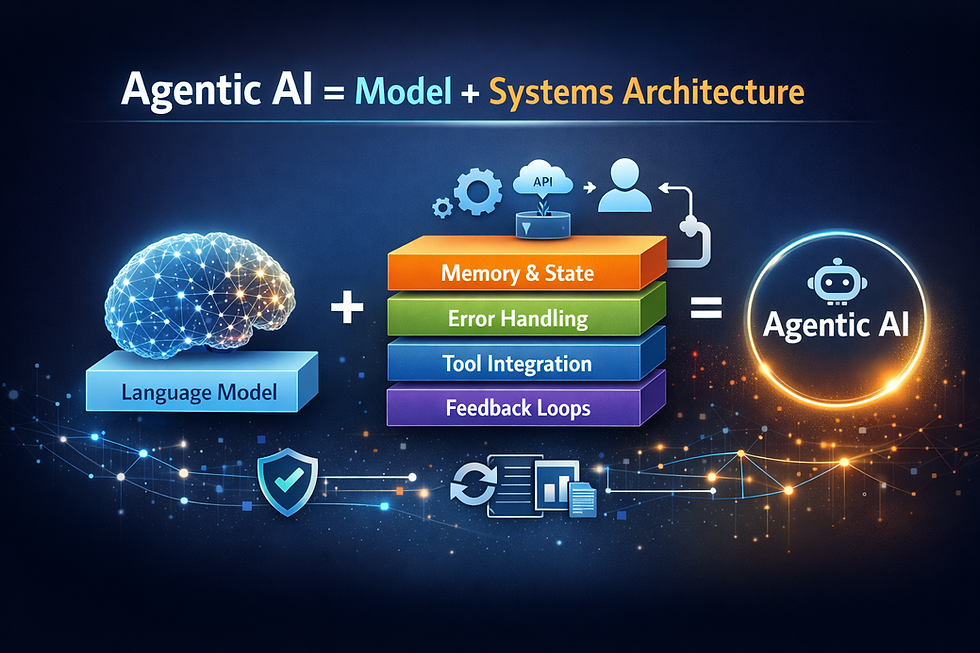

In my previous post, I argued that agentic AI is as much a systems problem as it is a model problem. Memory is one of the places where that argument becomes very real, very quickly.

When people talk about memory in agents, the framing is almost always taxonomic. Short-term versus long-term. Working memory versus episodic memory. Those distinctions make sense academically, but they haven’t been very useful to me when I’m trying to understand why a system behaved strangely in production.

The question I’ve found more useful is simpler: what problem is this piece of memory actually solving? I’m not sure that framing is fully right either, but it’s gotten me further than the taxonomy.

In practice, you end up with a few different concerns that keep showing up whether you planned for them or not. The first one you hit is the most obvious.

The most immediate layer is just conversational state. Whatever the agent needs to finish the task in front of it — the last few turns, intermediate tool outputs, the active goal. This usually sits close to the orchestration layer and disappears when the task ends. If it doesn’t, conversations start bleeding into each other in ways that are surprisingly hard to debug.
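Here is a minimal sketch of what "disappears when the task ends" can look like in code. The `Orchestrator` and `TaskState` names are hypothetical, not from any particular framework; the only point is that the cleanup lives in a `finally` block, so it runs even when the task fails.

```python
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, List, Optional


@dataclass
class TaskState:
    """Everything the agent needs for the task in front of it (hypothetical shape)."""
    goal: str
    turns: List[str] = field(default_factory=list)
    tool_outputs: Dict[str, Any] = field(default_factory=dict)


class Orchestrator:
    """Hypothetical orchestration layer that owns task-scoped state."""

    def __init__(self) -> None:
        self.active: Optional[TaskState] = None

    @contextmanager
    def task(self, goal: str) -> Iterator[TaskState]:
        self.active = TaskState(goal=goal)
        try:
            yield self.active
        finally:
            # Always drop task state, even if the task raised, so nothing
            # from this conversation can bleed into the next one.
            self.active = None


orchestrator = Orchestrator()
with orchestrator.task("recommend music") as state:
    state.turns.append("user: something upbeat, please")
    state.tool_outputs["search"] = ["track-1", "track-2"]
```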

One layer out from that is what ends up functioning as session memory. A user walks away and comes back ten minutes later, or they’re configuring something across multiple turns. The system needs just enough continuity to avoid forcing them to start over. In practice this expires after a few hours or maybe a day. Getting it wrong is annoying but usually recoverable.
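A session layer like this can be sketched as a key-value store with a time-to-live. Everything here is an assumption for illustration: the `SessionStore` name, the six-hour default, and the in-memory dict standing in for whatever store a real system would use. The clock is injectable so the expiry logic can be tested without waiting.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Optional, Tuple


@dataclass
class SessionStore:
    """Hypothetical in-memory session store whose entries expire after a TTL."""
    ttl_seconds: float = 6 * 3600  # assumed value for "a few hours"
    clock: Callable[[], float] = time.monotonic
    _items: Dict[Tuple[str, str], Tuple[float, Any]] = field(default_factory=dict)

    def put(self, session_id: str, key: str, value: Any) -> None:
        # Store the value together with the time at which it stops being valid.
        self._items[(session_id, key)] = (self.clock() + self.ttl_seconds, value)

    def get(self, session_id: str, key: str) -> Optional[Any]:
        entry = self._items.get((session_id, key))
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            # Expired: delete it so stale state cannot resurface later.
            del self._items[(session_id, key)]
            return None
        return value
```

Reads after the TTL simply return `None`, which matches the "annoying but recoverable" failure mode: the user repeats themselves once, and nothing stale gets carried forward.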

The messier problem shows up when you try to persist anything longer than that — when the system is supposed to actually learn something about a user and carry it forward. That’s where things started breaking for us.

A user was going through music recommendations with our assistant. Reacting to something they clearly didn’t like, they said something along the lines of “god, not jazz.” Sarcastic. The kind of throwaway line you’d say to a friend while scrolling through a bad playlist.

Our system embedded it, stored it, and interpreted it as a durable preference signal.

For weeks afterward, anything that touched jazz — even loosely related artists — got quietly filtered out of results.

We didn’t catch it through monitoring. Someone on the team noticed something odd while testing a music query that normally should have surfaced a few recommendations. Tracing it back took longer than it should have. We eventually found the original utterance buried in an interaction log from almost three weeks earlier.

Nothing in the retrieval pipeline was technically broken. The system had done exactly what it was designed to do.

The real problem was that we’d built a system that gave every utterance the same weight. A confirmed preference that appeared across multiple sessions had the same authority as a sarcastic aside made once in a moment of frustration.

Humans don’t interpret language that way. When a friend says “I hate jazz” while reacting to one bad song, you don’t permanently update your mental model of their taste. You understand that some statements are expressions, not declarations.

Our agent didn’t know the difference.

That pushed us to rethink how memory writes worked at all.

Instead of automatically promoting conversational content into persistent user memory, we started treating memory writes as deliberate events with conditions attached. A preference might need to appear across multiple sessions. Or the user might need to act on it behaviorally — selecting something consistent with what they said. Corrections and follow-through started carrying more weight than single sentences.

If those signals weren’t present, the information simply aged out with the session.
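To make the idea concrete, here is a rough sketch of a write gate along those lines. It is not our production code; the `WriteGate` and `Evidence` names, the preference keys, and the two-session threshold are all assumptions chosen for illustration. A candidate is promoted only if it was stated in enough distinct sessions, or stated at least once and then backed by follow-through.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Evidence:
    """Signals collected for one candidate preference, e.g. 'dislikes:jazz'."""
    sessions: Set[str] = field(default_factory=set)  # sessions where it was stated
    confirmations: int = 0  # consistent actions or explicit corrections


@dataclass
class WriteGate:
    """Hypothetical gate deciding whether a candidate reaches durable memory."""
    min_sessions: int = 2  # assumed threshold
    evidence: Dict[str, Evidence] = field(default_factory=dict)

    def _get(self, key: str) -> Evidence:
        return self.evidence.setdefault(key, Evidence())

    def saw_statement(self, key: str, session_id: str) -> None:
        # Repeating something within one session adds no new evidence.
        self._get(key).sessions.add(session_id)

    def saw_follow_through(self, key: str) -> None:
        self._get(key).confirmations += 1

    def should_persist(self, key: str) -> bool:
        ev = self.evidence.get(key)
        if ev is None or not ev.sessions:
            return False
        repeated = len(ev.sessions) >= self.min_sessions
        acted_on = ev.confirmations > 0
        return repeated or acted_on


gate = WriteGate()
gate.saw_statement("dislikes:jazz", session_id="s1")
print(gate.should_persist("dislikes:jazz"))  # a single aside is not enough
```

Under this policy the "god, not jazz" line would have stayed a candidate and never reached durable memory unless the user said it again in a later session or acted consistently with it.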

At first this felt wrong. It felt like we were throwing away useful signal. In practice, it mostly filtered out noise. Agent behavior became noticeably more stable after that change — not dramatically different in any single interaction, but fewer of those “why did it do that?” moments in the long tail.

There’s also a technical wrinkle that shows up once systems start getting more complex.

Vector memory is not a database. Treating it like one eventually causes problems.

Embeddings are very good at fuzzy recall. They’re useful when you want to surface semantically related information from past interactions. But the moment you need to store something precise — a home city, a dietary restriction, a preferred delivery window — you want structure rather than approximation.

We saw agents confidently surface the wrong preference simply because a semantically similar piece of text had shown up in the retrieval set. The fix wasn’t a better embedding model.

The fix was separating things that probably shouldn’t have been treated as the same problem in the first place.

Structured attributes and factual data ended up living in one place. Past conversational experience and fuzzy recall lived somewhere else. Recent conversational context was handled separately from both.
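A toy version of that split might look like the sketch below. The `AgentMemory` class and its method names are hypothetical, and the bag-of-words cosine similarity is only a stand-in for a real embedding model so the example runs without dependencies. What matters is the shape: precise attributes get an exact lookup that either returns the stored value or nothing, while fuzzy recall is a separate path that is allowed to be approximate.

```python
import math
from collections import Counter, deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List, Optional, Tuple


def _bow(text: str) -> Counter:
    # Stand-in for an embedding: a bag of lowercase words.
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class AgentMemory:
    # Structured attributes: exact keys, exact values, no similarity involved.
    facts: Dict[Tuple[str, str], str] = field(default_factory=dict)
    # Past conversational experience: searched by similarity.
    episodes: List[str] = field(default_factory=list)
    # Recent context: a short rolling window, separate from both.
    recent_turns: Deque[str] = field(default_factory=lambda: deque(maxlen=6))

    def set_fact(self, user_id: str, attribute: str, value: str) -> None:
        self.facts[(user_id, attribute)] = value

    def get_fact(self, user_id: str, attribute: str) -> Optional[str]:
        # Either the stored value or None; never a "close enough" neighbor.
        return self.facts.get((user_id, attribute))

    def remember_episode(self, text: str) -> None:
        self.episodes.append(text)

    def recall(self, query: str, k: int = 3) -> List[str]:
        q = _bow(query)
        ranked = sorted(self.episodes, key=lambda e: _cosine(q, _bow(e)), reverse=True)
        return ranked[:k]
```

With this layout a question like "what is the user's home city?" goes through `get_fact` and can never be answered by whichever city happened to appear in a similar past conversation.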

I’m not even sure those are the right words for the split. At the time we didn’t have a name for it. We just needed the system to stop mixing signals that clearly had very different meanings.

Most production systems eventually converge on some version of this, whether they design for it upfront or discover the need after something goes wrong.

Latency quietly shapes these decisions too.

Every retrieval step adds time. Every additional chunk of context increases token count and the chance the model attends to the wrong thing.

In one system we worked on, memory retrieval ended up consuming more wall-clock time than model inference. Not because the retrieval operation itself was slow, but because it sat on the critical path of every request. Tiering the memory layers helped — frequently accessed signals stayed in low-latency stores while colder information lived elsewhere and was only pulled when needed.
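The tiering idea is essentially a cache in front of a slower store. The sketch below assumes a hypothetical `TieredMemory` class with a small least-recently-used hot tier and a `cold_lookup` callable standing in for the slower backend; the capacity and names are illustrative, not what we ran.

```python
from collections import OrderedDict
from typing import Callable, Optional


class TieredMemory:
    """Hypothetical two-tier memory: a small LRU hot tier over a cold store."""

    def __init__(
        self,
        cold_lookup: Callable[[str], Optional[str]],
        hot_capacity: int = 128,  # assumed size
    ) -> None:
        self._cold_lookup = cold_lookup
        self._hot: "OrderedDict[str, str]" = OrderedDict()
        self._hot_capacity = hot_capacity
        self.cold_reads = 0  # how often a request had to leave the hot tier

    def get(self, key: str) -> Optional[str]:
        if key in self._hot:
            # Hot hit: mark as recently used and skip the cold store entirely.
            self._hot.move_to_end(key)
            return self._hot[key]
        self.cold_reads += 1
        value = self._cold_lookup(key)
        if value is not None:
            self._hot[key] = value
            if len(self._hot) > self._hot_capacity:
                # Evict the least recently used entry.
                self._hot.popitem(last=False)
        return value


cold_store = {"u1:home_city": "Austin", "u1:diet": "vegetarian"}
memory = TieredMemory(cold_store.get, hot_capacity=1)
memory.get("u1:home_city")
memory.get("u1:home_city")  # served from the hot tier
```

Tracking something like `cold_reads` per request is also a cheap way to see how much of the critical path the cold tier is actually responsible for.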

A lot of agent architecture ends up rediscovering ideas from systems that have been around for decades.

The part I think gets the least attention, though, is memory that has nothing to do with remembering users.

Production systems accumulate patterns about themselves. Certain tools fail under specific conditions. Some prompt structures reliably lead to retries. Some tasks almost always need clarification before anything useful can happen.

We started capturing those patterns and feeding them back into routing logic and training data.

One concrete example: we had a tool that consistently failed when the user’s query contained a relative date — things like “next Tuesday” or “last week.” The model kept calling the tool anyway. Once we recognized the pattern and handled that case earlier in the pipeline, an entire class of failures simply disappeared.

The model didn’t change. The system just learned to route around one of its weak spots.
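A guard of this kind can be very small. The sketch below is an assumption about what "handled that case earlier in the pipeline" could look like: a regular expression that spots common relative-date phrases and a `route` function that sends those queries to a date-resolution step before the tool is called. The pattern and the route names are hypothetical, and the pattern is deliberately incomplete.

```python
import re

# Assumed, non-exhaustive pattern for relative date phrases.
_RELATIVE_DATE = re.compile(
    r"\b(?:today|tomorrow|yesterday|tonight"
    r"|(?:next|last|this)\s+(?:week|month|year"
    r"|monday|tuesday|wednesday|thursday|friday|saturday|sunday)"
    r"|in\s+\d+\s+(?:days?|weeks?|months?)"
    r"|\d+\s+(?:days?|weeks?|months?)\s+ago)\b",
    re.IGNORECASE,
)


def route(query: str) -> str:
    """Pick the next pipeline step for a query (route names are hypothetical)."""
    if _RELATIVE_DATE.search(query):
        # Known weak spot: resolve the date to an absolute one before the tool runs.
        return "resolve_dates_first"
    return "call_tool"


print(route("book a table next Tuesday"))
print(route("book a table on 2024-05-03"))
```

The first query is routed to date resolution and the second goes straight to the tool. The rule itself is the stored knowledge: it encodes a failure pattern the system observed about itself.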

That kind of operational learning ends up functioning as another form of memory. It’s just memory about the system itself rather than about any particular user.

If there’s one principle that seems to emerge from all of this, it’s that good agents forget aggressively.

Not because storage is expensive — although it is. And not just because retrieval adds latency — although that matters too. The deeper issue is signal quality. A system that records everything eventually ends up confused by its own history.

Before writing anything to durable memory, the real question probably isn’t “did this happen?” It’s whether the system should allow that moment to influence what comes next.

I’m still not sure we’ve fully figured out the right abstractions for this layer. The four concerns I described — conversational state, session continuity, user preferences, and system-level learning — aren’t a framework so much as a list of the places where we’ve been surprised. Someone else building a different kind of system might carve it up completely differently.

In my previous post, I argued that agentic AI is as much a systems problem as it is a model problem. Memory is one of the clearest examples of that. Models generate responses, but systems decide what gets remembered, what gets ignored, and what eventually shapes behavior. The difference between a demo agent and a production agent often lives in that layer, and most of the time you only find out exactly where after something breaks.
